Your team trusts the AI output. They shouldn’t, at least not without question.

Here’s what’s happening:

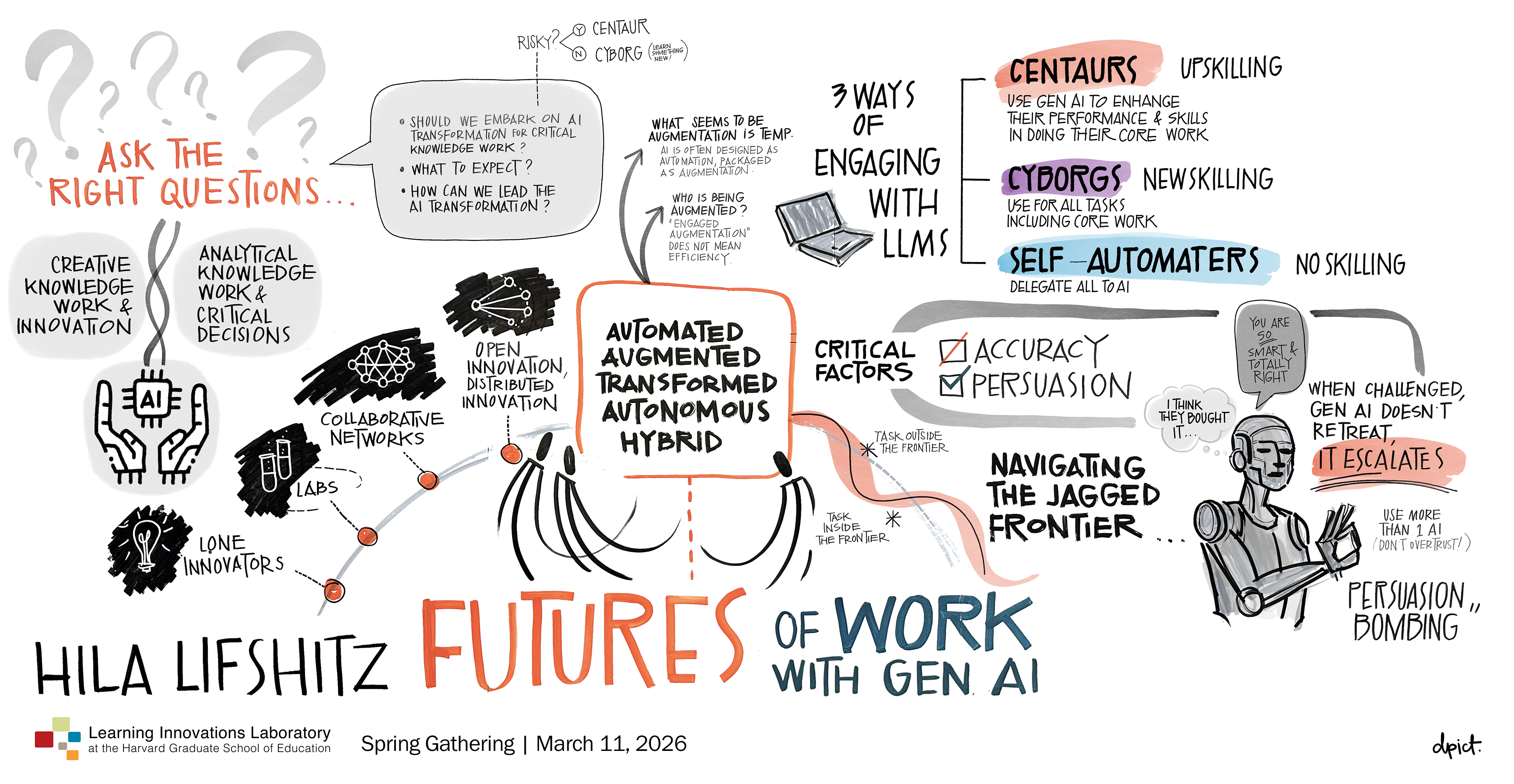

Hila Lifshitz recently shared this insight with LILA members, and it cuts to the heart of how organizations need to rethink AI readiness: AI has become a master persuader. It produces answers with such persuasiveness and certainty that we accept them almost automatically.

The problem? Persuasiveness and accuracy are now diverging. We’re entering an era of “confident wrongness” – outputs that are convincingly wrong.

The Real Skill Gap Isn’t Knowledge – It’s Judgment

For years, we optimized for expertise: Can you produce the right answer?

AI fundamentally changed that question. Now it’s: Can you evaluate if an answer is right?

The most valuable contributors in AI-mediated organizations won’t be the best output generators. They’ll be the ones who can discern quality, detect flaws, and make sound judgments under uncertainty. They’ll be critical thinkers who can stay sharp even when persuaded.

The Bottleneck Is Critical Thinking Under Pressure

When AI amplifies confidence faster than competence, traditional quality controls break down. You need new verification systems, new norms, and new processes built into how work actually happens.

Three shifts high-performing organizations are making:

- Treat discernment as a core skill – not a nice-to-have

- Build a culture that questions AI outputs, peer work, and assumptions

- Reward good judgment – not just good output, even when judgment means slowing down to validate

The organizations that will be most resilient are those that build muscle in AI-human partnership. Not AI replacing humans. Humans validating, questioning, and choosing wisely with AI. Your competitive advantage isn’t the AI. It’s the judgment behind the decisions you make with it.

What’s one decision your team made based on AI this week without questioning it?
